Reposted from Appium Pro

In a previous edition of Appium Pro, I discussed mobile performance and UX testing at a conceptual level. In this edition, let’s take a look at some pointers for tracking the various metrics we saw earlier. There are way too many metrics to consider each in detail, so we’ll just outline a solution in each case; stay tuned for future articles with fully-baked examples (and let me know which metrics you’re most interested in reading about!). In this set of pointers, I’m going to focus on free and open source approaches, saving a deeper look at paid services for a follow-up article.

Time-to-interactivity (TTI)

This metric measures the time elapsed from when the user launches your app until it’s actually usable. A first-pass solution to measuring TTI would be throwing some timestamps into your test code before starting an Appium session and after the session is fully started. However, this approach falls short in several ways:

- You’re measuring Appium startup time in addition to app startup time.

- Just because you have an Appium session loaded doesn’t mean your app is actually interactable yet.

As a second pass, we can try an approach like we did in Edition 97, where we delayed actually launching the app until after Appium startup itself was complete. This gets us a lot closer to a solid metric, but is still not bulletproof, because measurements are contingent on a find-element poll, which might not occur at exactly the moment the app is fully loaded.
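To make the polling caveat concrete, here is a minimal sketch of the timing logic in Python. The names `measure_tti`, `launch`, and `is_ready` are hypothetical: in a real test, `launch` might wrap whatever command starts the app once the Appium session is already up, and `is_ready` might wrap a find-element check for a known element. The measured value can overshoot the true TTI by roughly one poll interval plus the time the readiness check itself takes.

```python
import time
from typing import Callable


def measure_tti(launch: Callable[[], None],
                is_ready: Callable[[], bool],
                timeout: float = 30.0,
                poll_interval: float = 0.05) -> float:
    """Return the seconds between calling launch() and is_ready() first being True.

    launch and is_ready are placeholders supplied by the caller; nothing here
    talks to Appium directly.
    """
    start = time.monotonic()  # monotonic clock: unaffected by wall-clock changes
    launch()
    while True:
        if is_ready():
            return time.monotonic() - start
        if time.monotonic() - start > timeout:
            raise TimeoutError(f"app not interactive after {timeout} seconds")
        time.sleep(poll_interval)
```

A shorter `poll_interval` tightens the measurement, at the cost of sending more commands to the device while the app is still starting up.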

DNS resolution

How on earth can you test that your mobile app API calls are hitting the appropriate server? Obviously checking this assumes that (a) you know the domains the app is trying to hit, and (b) you know the IP addresses it’s supposed to resolve to (for its region, or whatever). The only obvious and free solution I came up with to this problem also assumes (c) you are running on emulators and simulators (i.e., using the same network as your test machine). When you’re running emulators and simulators, the network used by the device under test is in fact your host machine’s network, and you can use tcpdump to examine all network traffic leaving your machine, including DNS resolution requests. Of course, looking at all network traffic will be a bit of a firehose, so you can restrict tcpdump’s output to requests to nameservers (typically running on port 53):

sudo tcpdump udp port 53

The output of DNS requests will look something like:

20:09:54.099145 IP 10.252.121.60.62613 > resolver.qwest.net.domain: 52745+ A? play.google.com. (33)

20:09:54.101718 IP resolver.qwest.net.domain > 10.252.121.60.62613: 52745 1/0/0 A 216.58.217.46 (49)

So it would be straightforward (if not easy) to write a script that runs tcpdump as a subprocess, scrapes output during the space of a mobile app call, and makes assertions about domain name resolution!
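As a rough illustration of such a script, the Python sketch below pairs each DNS query with its response using the transaction ID that appears in tcpdump output like the sample above, and builds a map from domain name to resolved IPv4 addresses. The function names and regular expressions are my own assumptions, written against that sample output only; other tcpdump versions or record types may format lines differently. The capture helper needs the same root privileges as the `sudo tcpdump` command above.

```python
import re
import subprocess
import time
from typing import Dict, Iterable, List

# Patterns written against the sample tcpdump output above (an assumption,
# not an exhaustive grammar of tcpdump's DNS formatting).
QUERY_RE = re.compile(r": (\d+)\S* (?:\[.*?\] )?A\? (\S+) \(\d+\)")
RESPONSE_RE = re.compile(r": (\d+)\S* \d+/\d+/\d+ (.+) \(\d+\)")
A_RECORD_RE = re.compile(r"\bA (\d{1,3}(?:\.\d{1,3}){3})")


def resolve_map(lines: Iterable[str]) -> Dict[str, List[str]]:
    """Map each queried domain to the IPv4 addresses returned for it."""
    pending: Dict[str, str] = {}         # DNS transaction id -> queried name
    resolved: Dict[str, List[str]] = {}  # queried name -> IPv4 addresses
    for line in lines:
        query = QUERY_RE.search(line)
        if query:
            pending[query.group(1)] = query.group(2).rstrip(".")
            continue
        response = RESPONSE_RE.search(line)
        if response and response.group(1) in pending:
            name = pending.pop(response.group(1))
            ips = A_RECORD_RE.findall(response.group(2))
            resolved.setdefault(name, []).extend(ips)
    return resolved


def capture_dns_lines(seconds: float) -> List[str]:
    """Run tcpdump for a fixed window and return its output lines.

    -l makes stdout line-buffered; -n skips reverse lookups of addresses.
    """
    proc = subprocess.Popen(
        ["tcpdump", "-l", "-n", "udp", "port", "53"],
        stdout=subprocess.PIPE, stderr=subprocess.DEVNULL, text=True)
    try:
        time.sleep(seconds)  # trigger the app's network call during this window
    finally:
        proc.terminate()
    output, _ = proc.communicate()
    return output.splitlines()
```

A test could then assert something like `"216.58.217.46" in resolve_map(lines)["play.google.com"]`, with the expected address coming from whatever your team knows the domain should resolve to.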

Unfortunately, this would not work on real devices. For real devices you’d need to set up some kind of DNS proxy and have your devices configured to use it for DNS resolution, so you can log or track data about DNS requests.

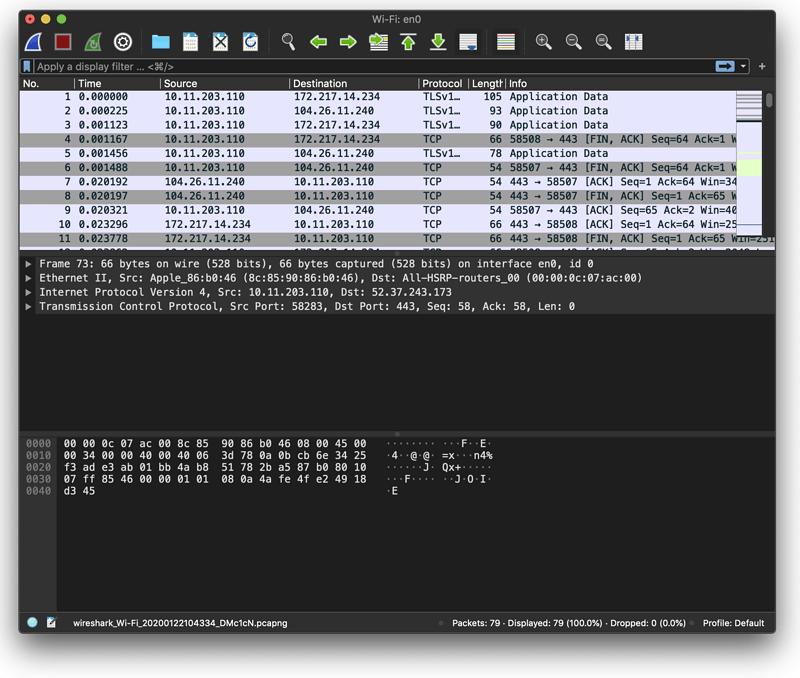

Time till 1st byte, TCP delay, TLS handshake time

These low-level network metrics are best captured by a network analysis tool like Wireshark, which can listen to all TCP traffic that passes through a network interface, and provide more data than a simple tcpdump log. The data Wireshark captures is made available in a special .pcap file, which has become a standard for representing network data.

Note that this means you could also use Wireshark for DNS request examination!

Using a .pcap file (which stands for “packet capture” because it represents every single packet that traverses a network interface), you can get a ton of information about network requests, including the time they took. .pcap files can be parsed in pretty much any language, for example with the jnetpcap library for Java, so these timings can be computed programmatically rather than read off the Wireshark UI.
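To give a feel for what parsing involves, here is a minimal Python sketch that reads only the per-packet timestamps from a classic .pcap file using the standard library. It assumes the classic format with microsecond timestamps (a 24-byte file header followed by 16-byte record headers) and does not handle the newer pcapng format, which Wireshark may write by default. The function name `packet_timestamps` is hypothetical. Measuring something like TLS handshake time would additionally require decoding the packet payloads to find the relevant packets, which a dedicated library does far better than this sketch.

```python
import struct
from typing import List


def packet_timestamps(path: str) -> List[float]:
    """Return the capture timestamp (in seconds) of every packet in a classic pcap file."""
    with open(path, "rb") as f:
        file_header = f.read(24)
        if len(file_header) < 24:
            raise ValueError("file too short to be a pcap capture")
        magic = file_header[:4]
        if magic == b"\xd4\xc3\xb2\xa1":
            endian = "<"  # written on a little-endian machine
        elif magic == b"\xa1\xb2\xc3\xd4":
            endian = ">"  # written on a big-endian machine
        else:
            raise ValueError("not a classic microsecond-resolution pcap file")

        stamps = []
        while True:
            record_header = f.read(16)
            if len(record_header) < 16:
                break  # end of file
            ts_sec, ts_usec, incl_len, _orig_len = struct.unpack(
                endian + "IIII", record_header)
            stamps.append(ts_sec + ts_usec / 1_000_000)
            f.seek(incl_len, 1)  # skip the packet bytes themselves
        return stamps
```

With the timestamps in hand, `stamps[-1] - stamps[0]` gives the time spanned by the capture; narrowing that to a single request means filtering packets by address and port first.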

Wireshark is designed as a GUI tool, which limits its utility in CI environments, though it can be launched from the CLI in an auto-record mode, and directed to write the resulting capture file to any location.

And finally, any method like this will again only work on virtual devices, unless you’re somehow able to route real device traffic through a router which is itself running Wireshark!

Other network analysis approaches

So far I’ve tried to recommend very low-level and general tools, but there are also some other options. Some of these are specific to Android:

- Advanced Network Profiling - a library produced by Google that you build into your app, which can capture and output network data

- Stetho - a similar library from Facebook that provides a web devtools-like environment for inspecting your app’s network requests. Also requires building into the app.

Cross-platform solutions would include the use of man-in-the-middle proxies:

- Postman - a GUI-based tool that can provide network analysis information when you proxy mobile app requests through it.

- mitmproxy - an open-source CLI-based tool which allows proxying of HTTP/HTTPS traffic

Proxy solutions require configuring the device under test with the correct proxy settings, and trusting the proxy as a certificate authority for HTTPS requests to be visible for analysis. To learn more about how to put all this together, you can check out the Appium Pro articles on capturing iOS simulator network traffic, capturing Android emulator traffic, and capturing network traffic with Java in your test scripts.
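For a sense of what the proxy route can yield, below is a small sketch of a mitmproxy addon in Python that records per-request timings as seen from the proxy. It assumes, based on my understanding of mitmproxy's addon API, that the `response` hook receives a flow whose request and response objects expose `timestamp_start` and `timestamp_end`, and that the request exposes `pretty_url`. The class name `TimingAddon` is my own. Note that these numbers are measured at the proxy, not on the device, so they leave out the hop between device and proxy.

```python
class TimingAddon:
    """Collect rough timing data for every completed HTTP(S) exchange."""

    def __init__(self):
        self.timings = []

    def response(self, flow):
        # Called by mitmproxy once the full response has been read.
        request, response = flow.request, flow.response
        self.timings.append({
            "url": request.pretty_url,
            # Time from the request being fully sent to the first response byte.
            "time_to_first_byte": response.timestamp_start - request.timestamp_end,
            # Time from the first request byte to the last response byte.
            "total": response.timestamp_end - request.timestamp_start,
        })


# mitmproxy looks for a module-level list named `addons` in scripts it loads.
addons = [TimingAddon()]
```

If saved as, say, `timing_addon.py` (a hypothetical filename), the script could be loaded with `mitmdump -s timing_addon.py`, and a test harness could read or log the collected `timings` afterwards.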

CPU utilization, memory consumption, etc…

These low-level device metrics can be retrieved in a minimal way using Appium itself. Check out these earlier Appium Pro articles for more info (note that with iOS especially, programmatically using retrieved performance info is not straightforward at all):

Video quality, blank screen time, animation time

These last metrics are particularly hard to determine using free and open source tools. Video quality is a subjective measurement and to determine it in an automated fashion would require (a) the ability to capture video from the screen (which Appium can do via its startScreenRecording command), and (b) some machine learning model which could take a video as input and provide a quality judgment as output.

I could not find a good open source tool to measure video quality (though there is a paper outlining how such a tool might be developed).

Similarly, for blank screen time, the best approach would involve training some kind of machine learning model to recognize screens with no elements, though an easy approximation could be made by using Appium and logging how much time elapses with a typical element wait.

Conclusion

In general, the world of free and open source network and performance testing is a bit of a rough and tumble place. In some cases, the tools exist, but are not well-integrated with the ecosystem a tester is used to working with. In other cases, we are limited to certain platforms or devices. In yet other cases, the best tools out there today are probably not free and open source.

For that reason, stay tuned, because we’re going to consider some paid tools and services in a future edition of Appium Pro, so you have the best understanding of the landscape and can make good decisions about the costs and benefits of adopting various approaches to performance testing of mobile apps.