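Many students are unfamiliar with building Scala projects, and Diffy's various overseas dependencies make it hard to compile, so the academy provides a precompiled package for everyone.

Download

Version 1.0.0: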

http://download.testing-studio.com/diffy/

Diffy

Status

Diffy is used in production at:

- Mixpanel

- Airbnb (Scalability) (Migration)

- Baidu

- Bytedance

and blogged about by cloud infrastructure providers like:

If your organization is using Diffy, consider adding a link here and sending us a pull request!

Diffy is being actively developed and maintained by the engineering team at Sn126.

Feel free to contact us via LinkedIn, Gitter, or Twitter.

What is Diffy?

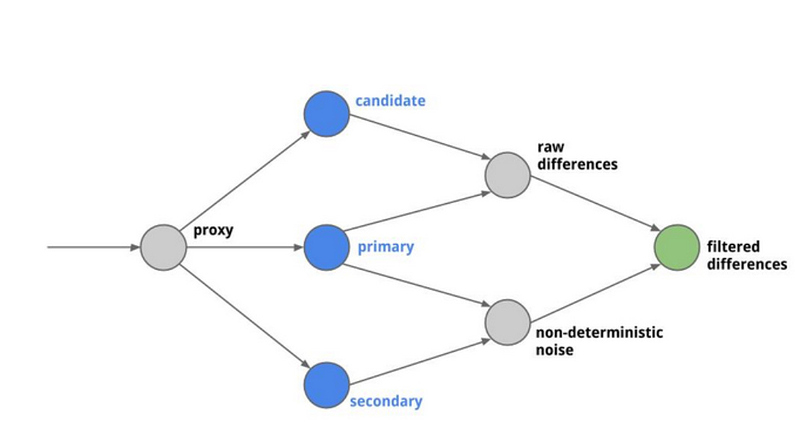

Diffy finds potential bugs in your service using running instances of your new code and your old code side by side. Diffy behaves as a proxy and multicasts whatever requests it receives to each of the running instances. It then compares the responses, and reports any regressions that may surface from those comparisons. The premise for Diffy is that if two implementations of the service return “similar” responses for a sufficiently large and diverse set of requests, then the two implementations can be treated as equivalent and the newer implementation is regression-free.

How does Diffy work?

Diffy acts as a proxy that accepts requests drawn from any source that you provide and multicasts each of those requests to three different service instances:

- A candidate instance running your new code

- A primary instance running your last known-good code

- A secondary instance running the same known-good code as the primary instance

As Diffy receives a request, it is multicast and sent to your candidate, primary, and secondary instances. When those services send responses back, Diffy compares those responses and looks for two things:

- Raw differences observed between the candidate and primary instances.

- Non-deterministic noise observed between the primary and secondary instances. Since both of these instances are running known-good code, you should expect responses to be in agreement. If not, your service may have non-deterministic behavior, which is to be expected.

Diffy measures how often primary and secondary disagree with each other vs. how often primary and candidate disagree with each other. If these measurements are roughly the same, then Diffy determines that there is nothing wrong and that the error can be ignored.
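As a rough illustration of that comparison, here is a minimal shell sketch. The function name `classify_differences` and the 5-point tolerance are assumptions made for this example; the text above does not state how Diffy itself decides that two rates are "roughly the same".

```shell
# Hypothetical helper, not part of Diffy.
# Compares the candidate-vs-primary difference rate with the
# primary-vs-secondary (noise) rate and prints a verdict.
# Args: raw_count noise_count total_requests [tolerance_in_percentage_points]
classify_differences() {
  local raw=$1 noise=$2 total=$3 tolerance=${4:-5}
  if [ "$total" -le 0 ]; then
    echo "unknown"            # no traffic yet, nothing to compare
    return 0
  fi
  # Rates in whole percent, using integer arithmetic.
  local raw_pct=$(( raw * 100 / total ))
  local noise_pct=$(( noise * 100 / total ))
  local gap=$(( raw_pct - noise_pct ))
  if [ "$gap" -gt "$tolerance" ]; then
    echo "regression"         # candidate disagrees far more often than noise explains
  else
    echo "noise"              # rates are roughly the same (assumed tolerance)
  fi
}
```

For example, `classify_differences 40 2 100` prints `regression`, while `classify_differences 10 9 100` prints `noise`.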

Getting started

If you are new to Diffy, please refer to our Quickstart guide.

Upgrade to Isotope

- Log in to Isotope.

- Click on the services tab to create the service you want to test.

- Download the resulting local.isotope file.

- Deploy Diffy with the isotope.config flag pointing to the location of local.isotope:

    java -jar ./target/scala-2.12/diffy-server.jar \
        -candidate='localhost:9200' \
        -master.primary='localhost:9000' \
        -master.secondary='localhost:9100' \
        -service.protocol='http' \
        -serviceName='ExampleService' \
        -summary.delay='1' \
        -summary.email='isotope@diffy.ai' \
        -proxy.port=:8880 \
        -admin.port=:8881 \
        -http.port=:8888 \
        -isotope.config='/path/to/local.isotope'

- Send some traffic to your deployed Diffy instance.

- Go to the analysis tab to see your latest and historical results.

Support

Please reach out to isotope@sn126.com for support. We look forward to hearing from you.

Getting started

Running the example

The example script included here builds and launches example servers as well as a Diffy instance. Verify that the following ports are available (9000, 9100, 9200, 8880, 8881, and 8888) and run ./example/run.sh start.

Once your local Diffy instance is deployed, you can send it a few requests by running ./example/traffic.sh.

You can now go to your browser at http://localhost:8888 to see what the differences across our example instances look like.

Digging deeper

That was cool, but now you want to compare old and new versions of your own service. Here’s how you can start using Diffy to compare three instances of your service:

- Deploy your old code to localhost:9990. This is your primary.

- Deploy your old code to localhost:9991. This is your secondary.

- Deploy your new code to localhost:9992. This is your candidate.

- Download the latest Diffy binary from Maven Central or build your own from the code using ./sbt assembly.

- Run the Diffy jar with the following command line arguments:

    java -jar diffy-server.jar \
        -candidate=localhost:9992 \
        -master.primary=localhost:9990 \
        -master.secondary=localhost:9991 \
        -service.protocol=http \
        -serviceName=Fancy-Service \
        -proxy.port=:8880 \
        -admin.port=:8881 \
        -http.port=:8888 \
        -rootUrl="localhost:8888" \
        -summary.email="info@diffy.ai" \
        -summary.delay="5"

- Send a few test requests to your Diffy instance on its proxy port:

    curl 'localhost:8880/your/application/route?with=queryparams'

- Watch the differences show up in your browser at http://localhost:8888.

- Note that after summary.delay minutes, your Diffy instance will email a summary report to your summary.email address.
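To send more than a handful of requests, one option is to keep request paths in a file and turn them into proxy URLs. The sketch below is only an illustration: the `diffy_urls` helper and the `paths.txt` file are hypothetical names, and only the proxy address localhost:8880 comes from the steps above.

```shell
# Hypothetical helper, not part of Diffy.
# Reads request paths from stdin (one per line) and prints the matching
# URL on the Diffy proxy port. Blank lines and '#' comments are skipped.
# Args: [proxy_host:port]  (defaults to the -proxy.port used above)
diffy_urls() {
  local proxy=${1:-localhost:8880}
  local path
  while IFS= read -r path; do
    case "$path" in
      ''|'#'*) continue ;;
    esac
    printf 'http://%s%s\n' "$proxy" "$path"
  done
}
```

A replay could then look like `diffy_urls < paths.txt | while IFS= read -r url; do curl -s -o /dev/null "$url"; done`, where each line of `paths.txt` is a path such as `/your/application/route?with=queryparams`.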

NOTE: By default, the names of the resources in the UI are fetched from the Canonical-Resource header in each request. However, this can be configured at boot with a static mapping, for example:

-resource.mapping='/foo/:param;f,/b/*;b,/ab/*/g;g,/z/*/x/*/p;p' \

In the snippet above, the configuration form is: <pattern>;<resource-name>[,<pattern>;<resource-name>]*

The first matching pattern in the list is the one that is used.
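To make the mapping form easier to read, the following sketch splits a -resource.mapping value into its pattern and resource-name pairs, in the order they would be tried. The `list_resource_mappings` helper is a hypothetical name used for illustration; it only parses the string and makes no claim about how Diffy matches `:param` or `*` against request paths.

```shell
# Hypothetical helper, not part of Diffy.
# Prints one "<pattern> -> <resource-name>" line per entry of a
# -resource.mapping value, preserving the order of the entries.
list_resource_mappings() {
  local rest=$1 entry
  while [ -n "$rest" ]; do
    entry=${rest%%,*}                  # text up to the first comma
    if [ "$entry" = "$rest" ]; then
      rest=''                          # last entry, nothing left
    else
      rest=${rest#*,}                  # drop the entry and its comma
    fi
    # Split the entry at the first ';' into pattern and resource name.
    printf '%s -> %s\n' "${entry%%;*}" "${entry#*;}"
  done
}
```

Calling `list_resource_mappings '/foo/:param;f,/b/*;b'` prints `/foo/:param -> f` followed by `/b/* -> b`.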

Using Diffy with Docker

You can pull the official Docker image with docker pull diffy/diffy

And run it with

    docker run -d --name diffy-01 \
        -p 8880:8880 -p 8881:8881 -p 8888:8888 \
        diffy/diffy \
        -candidate=localhost:9992 \
        -master.primary=localhost:9990 \
        -master.secondary=localhost:9991 \
        -service.protocol=http \
        -serviceName="Tier-Service" \
        -proxy.port=:8880 \
        -admin.port=:8881 \
        -http.port=:8888 \
        -rootUrl=localhost:8888 \
        -summary.email="docker@diffy.ai" \
        -summary.delay="5"

You should now be able to point to:

- http://localhost:8888 to see the web interface

- http://localhost:8881/admin for the admin console

- Use port 8880 to make the API requests

NOTE: You can pull the sample service and deploy the production (primary, secondary) and candidate tags to start playing with Diffy right away.

You can always build the image from source with docker build -t diffy .

FAQs

For safety reasons, POST, PUT, and DELETE requests are ignored by default. Add -allowHttpSideEffects=true to your command line arguments to enable these verbs.

HTTPS

If you are trying to run Diffy over an HTTPS API, the config required is:

-service.protocol=https

And if the HTTPS port is different from 443:

-https.port=123
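The two HTTPS settings can be generated together with a small helper. This is a sketch only: `https_flags` is a hypothetical name, and the port 8443 used below is an example value, not something Diffy prescribes.

```shell
# Hypothetical helper, not part of Diffy.
# Prints the flags needed to run Diffy against an HTTPS API.
# -https.port is only emitted when the port is not the default 443.
# Args: [https_port]
https_flags() {
  local port=${1:-443}
  printf '%s\n' '-service.protocol=https'
  if [ "$port" != 443 ]; then
    printf '%s\n' "-https.port=$port"
  fi
}
```

With no argument, `https_flags` prints only `-service.protocol=https`; `https_flags 8443` also prints `-https.port=8443`. The output can be appended to the java -jar command line in place of -service.protocol=http, for example via `$(https_flags 8443)`.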